It is often difficult to get the right trade-off between image quality and loading speed.

The ideal solution would be for each image to be adapted on a user-by-user basis: cropping it to fit the user’s device screen, stripping any unnecessary metadata and, of course, ensuring that it is displayed with optimum image quality. But achieving this soon becomes something of a black art.

What are the techniques, best practices, libraries and algorithms for image compression? This article gives an overview of the image optimizations you can take advantage of with our engine, for light and fast pages and a better user experience regardless of browser and screen size.

First and foremost, a key thing to note is that there are two types of compression:

- lossless compression: this reduces the data size or weight of the image, without any deterioration of quality. This essentially involves removing redundant information.

- lossy compression: this uses algorithms based on an analysis of human perception to re-encode the image in a form that requires less data. Deterioration of image quality is possible.
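To make the distinction concrete, here is a minimal sketch using only the Python standard library. It is an illustration, not what our engine runs: the "image" is a made-up list of grayscale values, zlib stands in for a lossless encoder, and dropping the low bits of each pixel stands in for a lossy, perception-based step.

```python
import zlib

# Toy 8-bit grayscale "image": a gradient with a little fine detail (made-up data).
pixels = bytes((x * 2 + (x * 7) % 5) % 256 for x in range(1024))

# Lossless: zlib (DEFLATE) only removes redundancy, so the round trip is exact.
lossless = zlib.compress(pixels, 9)
assert zlib.decompress(lossless) == pixels

# Lossy stand-in: discard the 4 least significant bits of each pixel first.
# The data becomes more repetitive, so it compresses better, but detail is lost.
quantized = bytes(p & 0xF0 for p in pixels)
lossy = zlib.compress(quantized, 9)
restored = zlib.decompress(lossy)

# Largest per-pixel difference introduced by the lossy step (at most 15 here).
max_error = max(abs(a - b) for a, b in zip(pixels, restored))
print(len(pixels), len(lossless), len(lossy), max_error)
```

The lossless version decodes to exactly the original bytes, while the lossy version is smaller at the cost of a bounded per-pixel error.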

The weight and the importance of images on a web page

Images make up almost half of your page weight. They therefore have a direct impact on how fast your web site loads.

(source HTTP Archive)


So if you wish to maintain optimum performance, image optimisation is a must.

However, image optimisation is also an extremely complex affair given the huge variety of image formats used on web sites nowadays: JPG, GIF, SVG, PNG, WebP, AVIF …

One of the perks of our frontend optimization engine is that it compresses images intelligently by taking advantage of next-generation formats (WebP and AVIF), as recommended by Google in the Lighthouse and PageSpeed Insights audits. It also resizes images automatically to fit the screen size of your users.
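Serving WebP or AVIF only makes sense when the browser can decode it, which browsers advertise in the `Accept` request header. The sketch below shows one way such a choice could be made. The function name and the preference order are assumptions for illustration, and the parsing deliberately ignores quality values (`q=`).

```python
def pick_image_format(accept_header: str, original: str = "jpeg") -> str:
    """Return the most compact format the browser claims to accept.

    Hypothetical helper: falls back to the original format when neither
    AVIF nor WebP is listed in the Accept header.
    """
    accepted = {
        part.split(";")[0].strip().lower()
        for part in accept_header.split(",")
    }
    for fmt in ("avif", "webp"):  # assumed preference order
        if f"image/{fmt}" in accepted:
            return fmt
    return original


print(pick_image_format("image/avif,image/webp,image/*,*/*;q=0.8"))
```

A browser that lists neither format simply keeps receiving the original JPEG, PNG or GIF.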

In particular, we apply to web images:

- on-the-fly selection of different algorithms: MozJPEG, PNGquant, PNGCRUSH and Giflossy;

- Mean Pixel Error based analysis to automatically determine the right compression settings to use to provide optimum quality/weight ratios;

- image display techniques tailored to the page in question: lazy loading, inlining and progressive rendering.

The best compression algorithms to optimize images

Image compression is an unavoidable part of web performance optimisation, and it is often the first step towards a fast web site. Here, then, are the tools that we have chosen to incorporate into our engine:

JPEG compression: MozJPEG and MPE analysis

JPEG is a format that is frequently used on web sites. It is particularly suited to photographs.

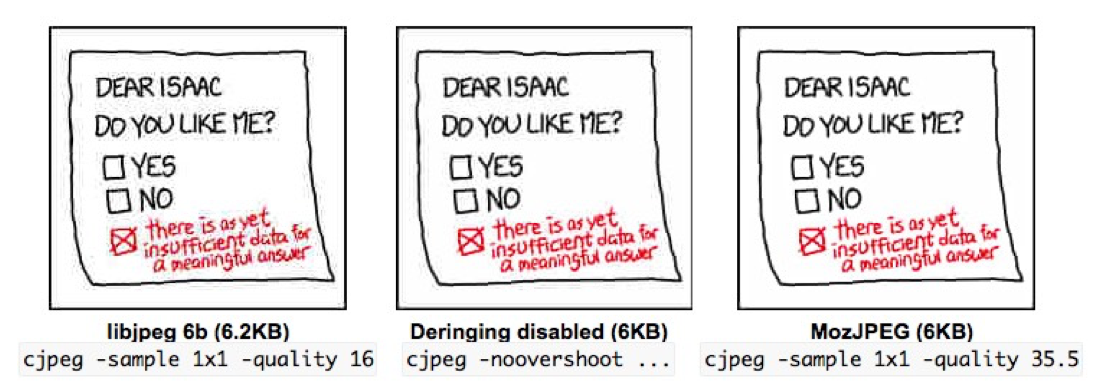

- MozJPEG, a recognised library

MozJPEG has become the de facto library for JPEG compression. Released in 2014, it has brought a significant improvement to image quality over existing libraries. The way it processes text also leads to a perceptible decrease in the grey “halo” effect that often plagues images containing text.

- MPE analysis for optimum quality/size ratios

JPEG compression can prove tricky when it comes to setting the quality parameter, which can be set to any value between 40 and 100. The parameter defaults to 80, offering reasonable quality/size ratios. However, we have found that choosing the right setting is particularly difficult for our customers, because the optimum setting varies from image to image.

We have developed a solution that automatically determines the settings to use to obtain an optimal trade-off between image quality and data size. To do so, our system uses an image comparison algorithm based on characteristics of the human visual system (MPE or Mean Pixel Error). The algorithm tests different quality settings from lowest to highest, checking that the compressed image does not exhibit any visible artefacts. Simple but effective: image weight is generally reduced by 30-70%.
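The search described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not our production code: `toy_encode` is a hypothetical stand-in for a real JPEG encoder (it just quantizes pixel values more coarsely at lower quality), and the error measure is a plain mean absolute difference rather than a perceptual metric.

```python
from typing import Sequence


def mean_pixel_error(a: Sequence[int], b: Sequence[int]) -> float:
    """Average absolute difference between two equally sized pixel lists."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def toy_encode(pixels: Sequence[int], quality: int) -> list:
    """Hypothetical stand-in for a JPEG encoder: lower quality, coarser steps."""
    step = max(1, (101 - quality) // 4)
    return [min(255, round(p / step) * step) for p in pixels]


def find_quality(pixels: Sequence[int], max_error: float,
                 qualities: Sequence[int] = range(40, 101, 5)) -> int:
    """Try settings from lowest to highest; keep the first acceptable one."""
    for quality in qualities:
        if mean_pixel_error(pixels, toy_encode(pixels, quality)) <= max_error:
            return quality
    return qualities[-1]  # nothing passed: fall back to the highest setting


gradient = list(range(256))
print(find_quality(gradient, max_error=1.0))
```

Because the search starts at the lowest setting, the first value that passes the error check is also the one that produces the smallest file among the candidates tested.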

PNG compression: PNGquant and PNGCRUSH

PNG is a format that is ideal for drawings, illustrations and pictures with a transparent background. The type of compression used for PNG files is usually lossless compression, which works by removing redundant information. For this we use a library called PNGCrush. It is also possible to convert PNG files using 24 or 32 bit colours into PNG files using 8 bit colours, which is the job of PNGquant.

This conversion reduces file size considerably (up to 70%) and retains alpha transparency. The images generated are, as you might expect, compatible with all modern browsers.

When using this technique, there are various algorithms that can also be used to compress the image while retaining optimum visual image quality.
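The idea behind the 8 bit conversion is to replace each full RGBA pixel with a one-byte index into a palette of at most 256 colours. The sketch below uses the naive "most popular colours" method purely for illustration. It is not how PNGquant works internally: real quantizers choose the palette far more carefully and apply dithering.

```python
from collections import Counter


def to_indexed(pixels: list, max_colors: int = 256):
    """Map RGBA tuples to (palette, indices) with at most max_colors entries."""
    # Naive palette: the most frequent colours in the image.
    palette = [color for color, _ in Counter(pixels).most_common(max_colors)]

    def nearest(color):
        # Index of the palette entry closest in squared RGBA distance.
        return min(
            range(len(palette)),
            key=lambda i: sum((a - b) ** 2 for a, b in zip(color, palette[i])),
        )

    lookup = {color: nearest(color) for color in set(pixels)}
    indices = bytes(lookup[color] for color in pixels)  # one byte per pixel
    return palette, indices


image = [(255, 0, 0, 255)] * 10 + [(0, 0, 255, 128)] * 5 + [(254, 0, 0, 255)]
palette, indices = to_indexed(image, max_colors=2)
print(palette, list(indices))
```

Each pixel now costs one byte instead of four before any further compression, and the alpha channel survives because it is stored in the palette entries.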

GIF compression: Giflossy

GIF format is mainly used for animation. Such images can therefore grow quickly in size. To counteract this, we use Giflossy.

Giflossy is an image encoder based on LZW compression. This involves indexing and then storing by reference long sequences of pixels that occur multiple times. In this way, instead of storing the pixel values multiple times, the actual sequence only needs to be stored once.
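For readers who want to see the dictionary mechanism at work, here is a minimal textbook LZW encoder and decoder. It is a sketch of the classic lossless algorithm only: it leaves out the variable code widths and clear codes of the GIF format, as well as the approximate matching that makes Giflossy lossy.

```python
def lzw_encode(data: bytes) -> list:
    """Encode bytes as a list of dictionary codes (textbook LZW)."""
    table = {bytes([i]): i for i in range(256)}
    current = b""
    codes = []
    for byte in data:
        candidate = current + bytes([byte])
        if candidate in table:
            current = candidate              # keep extending the known sequence
        else:
            codes.append(table[current])     # emit a reference to the sequence
            table[candidate] = len(table)    # remember the new, longer sequence
            current = bytes([byte])
    if current:
        codes.append(table[current])
    return codes


def lzw_decode(codes: list) -> bytes:
    """Rebuild the original bytes from the codes produced by lzw_encode."""
    if not codes:
        return b""
    table = {i: bytes([i]) for i in range(256)}
    previous = table[codes[0]]
    out = bytearray(previous)
    for code in codes[1:]:
        # A code not yet in the table can only be "previous + its first byte".
        entry = table[code] if code in table else previous + previous[:1]
        out += entry
        table[len(table)] = previous + entry[:1]
        previous = entry
    return bytes(out)


row = b"ABABABABABABABAB" * 4
codes = lzw_encode(row)
assert lzw_decode(codes) == row
print(len(row), "bytes ->", len(codes), "codes")
```

Repeated runs of pixels collapse into single codes, which is why flat or repetitive frames compress so well.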

This offers a 30-50% reduction in the size of animated image files, in exchange for a slight loss in quality.

SVG compression: SVGO

SVG files (especially those exported by a number of editors) often contain a large amount of redundant and unnecessary information (such as editor metadata, comments, cached items, etc.).

In order to reduce file size, the SVGO library removes and/or compresses all of this data, without affecting the quality of the image. This optimisation is particularly worthwhile when optimising CSS sprites.
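As a rough idea of the kind of clean-up involved, the toy function below strips comments, `<metadata>` blocks and whitespace between tags. It is a regex-based illustration only and not SVGO itself, which works on a parsed document and applies many more transformations. In particular, collapsing whitespace this way would not be safe for every SVG.

```python
import re


def strip_svg_clutter(svg: str) -> str:
    """Toy SVG clean-up: remove comments, <metadata> blocks and inter-tag whitespace."""
    svg = re.sub(r"<!--.*?-->", "", svg, flags=re.DOTALL)
    svg = re.sub(r"<metadata\b.*?</metadata>", "", svg, flags=re.DOTALL)
    svg = re.sub(r">\s+<", "><", svg)
    return svg.strip()


source = """<svg xmlns="http://www.w3.org/2000/svg">
  <!-- exported by an editor -->
  <metadata><title>draft</title></metadata>
  <rect width="4" height="4"/>
</svg>
"""
print(strip_svg_clutter(source))
```

The drawing instructions are untouched, so the rendered image is the same while the file is shorter.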

Image display techniques tailored to the web page in question

In addition to the work we do to compress the image file, we also perform additional tasks around image loading.

Lazy loading

Only those images that are actually visible will be loaded initially. The remaining images are loaded once the user begins to scroll the page. Our script is not run on the first few images on the page in order to take advantage of the browser’s lookahead capability.
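The "skip the first few images" rule can be illustrated with a small HTML rewrite. Note the assumptions: this sketch relies on the browser-native `loading="lazy"` attribute rather than on a script like ours, the function name and the `skip_first` parameter are hypothetical, and a regex is only adequate for simple markup.

```python
import re


def add_lazy_loading(html: str, skip_first: int = 2) -> str:
    """Add loading="lazy" to every <img> except the first skip_first ones."""
    seen = 0

    def rewrite(match):
        nonlocal seen
        seen += 1
        tag = match.group(0)
        # Leave early images alone so the browser can fetch them right away,
        # and do not touch tags that already set a loading attribute.
        if seen <= skip_first or "loading=" in tag:
            return tag
        return tag.replace("<img", '<img loading="lazy"', 1)

    return re.sub(r"<img\b[^>]*>", rewrite, html)


page = '<img src="hero.jpg"><img src="logo.png"><img src="footer.jpg">'
print(add_lazy_loading(page))
```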

Inlining

We embed small images directly into the web page HTML. With fewer requests, this improves loading times when the page is initially viewed, particularly on mobile devices.
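Inlining is typically done with a base64 `data:` URI. The sketch below shows the principle; the function name and the 2048-byte threshold are assumptions chosen for the example, not the values our engine uses.

```python
import base64


def inline_if_small(image_bytes: bytes, mime_type: str, url: str,
                    max_bytes: int = 2048) -> str:
    """Return a data URI for small images, or the original URL otherwise."""
    if len(image_bytes) > max_bytes:
        return url  # too large: a separate, cacheable request is cheaper
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


# The returned value can be used directly as the src of an <img> tag.
print(inline_if_small(b"GIF89a", "image/gif", "/pixel.gif"))
```

Base64 makes the payload about a third larger than the binary file, which is why the technique is reserved for small images.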

Progressive rendering

Without progressive rendering, JPEG images load from top to bottom. With a progressive JPEG, a low-quality version of the image is displayed straight away. Details then appear as the image is loaded. A smart algorithm is used to apply progressive compression to large images only.

Note that modern browsers may display JPEG images more quickly if they are generated using progressive compression.

Automatic image resizing and cropping

As with image weight, adapting image dimensions to the size of the screen is essential for the quality of the user experience (and also avoids loading unnecessary bytes). That’s why Fasterize makes it easier to implement responsive images, and lets you resize and crop images automatically.

To do this, our tool allows you to define the dimensions of an image in the URL (height/width ratio) so that it adapts correctly to the size of each user’s screen. It is thus possible to resize the image, but also to change its orientation, and even to move it within the page without using CSS (our engine then takes care of resizing the image automatically).
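The arithmetic behind ratio-preserving resizing is simple. The helper below is a hypothetical illustration of that calculation only; it does not reflect the URL syntax or the internals of our engine.

```python
def fit_width(orig_w: int, orig_h: int, target_w: int) -> tuple:
    """Scale an image down to target_w, keeping its aspect ratio.

    Images that are already narrower than target_w are left unchanged,
    since upscaling would only add bytes without adding detail.
    """
    if target_w >= orig_w:
        return orig_w, orig_h
    return target_w, max(1, round(orig_h * target_w / orig_w))


# A 1600x900 photo served to a 400-pixel-wide slot.
print(fit_width(1600, 900, 400))
```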

Today’s web is hugely bloated, and the plethora of images in all shapes and sizes is largely to blame. These images are either not optimised at all or not optimised effectively.

So, for a high-performance website with pages that display quickly for all your mobile and desktop users, and for the boost in engagement and conversions that follows, image compression is a mandatory step!
