There are two methods for measuring web performance: Synthetic Monitoring (which produces what Google tools call Lab data) and Real User Monitoring (which produces what Google tools call Field data). Often presented as opposites, they actually complement each other.

In this article, let’s take a look at these two methods of web performance monitoring. What are they about? What are they used for? How do you use them effectively? What do you need to know to use them properly?

Synthetic Monitoring (aka Active monitoring)

The tests are executed:

- on servers from datacenters

- using a throttled connection

- to simulate what an average user might experience under the conditions defined for the test.

Pages are actually loaded by a browser, so the performance data collected reflects the real user experience under the parameters set for the test:

- Browser

- Device type (mobile or desktop)

- Device model

- Geographic area

- Network quality…
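The test parameters listed above can be captured as a small configuration object. The following is a minimal sketch: the `SyntheticTestProfile` type, its field names and the throttling values are assumptions made for illustration, not the settings format of any particular tool.

```typescript
// Hypothetical shape of a synthetic test profile (illustrative field names).
type DeviceType = "mobile" | "desktop";

interface NetworkProfile {
  label: string;    // human-readable name, e.g. "4G"
  downKbps: number; // download bandwidth cap
  upKbps: number;   // upload bandwidth cap
  rttMs: number;    // added round-trip latency
}

interface SyntheticTestProfile {
  browser: string;
  deviceType: DeviceType;
  deviceModel: string;
  location: string;
  network: NetworkProfile;
}

// Example profile; the throttling numbers are illustrative assumptions.
const midRangeMobileUk: SyntheticTestProfile = {
  browser: "Chrome",
  deviceType: "mobile",
  deviceModel: "mid-range Android phone",
  location: "UK",
  network: { label: "4G", downKbps: 9000, upKbps: 9000, rttMs: 170 },
};

// Builds a one-line label so that runs made under the same profile
// can be grouped and compared.
function describeProfile(p: SyntheticTestProfile): string {
  return `${p.browser} / ${p.deviceType} (${p.deviceModel}) / ${p.location} / ${p.network.label}`;
}
```

Keeping the profile explicit makes it easier to rerun a test under strictly identical conditions later on.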

The perks of Synthetic Monitoring

- Control of test conditions

- Ability to test the same scenario several times and to compare the results before and after optimization

- Identification of problems encountered by a category of users (in certain geographical areas, on certain types of devices or browsers…)

Examples of tools that can be used for Synthetic Monitoring

Data collected with Synthetic Monitoring

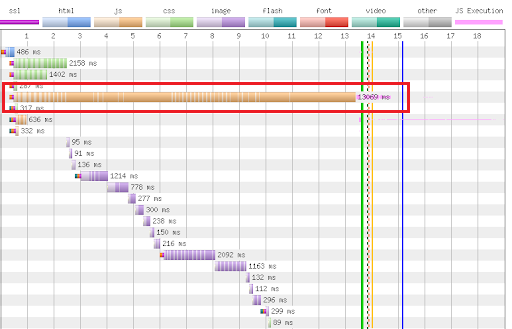

Waterfall

This graph, which usually takes the form of a waterfall, lets you visualize how the different elements of a page load. It is particularly useful for identifying elements that block loading (images, scripts, style sheets, fonts…).

WebPageTest waterfall showing a resource that strongly slows down the loading of the page, circled in red

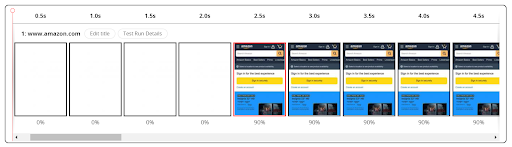

Filmstrips

Presented as a series of frames (or screens) on a timeline, a filmstrip shows what the user sees at each stage of the page load, and how the page is composed in the browser.

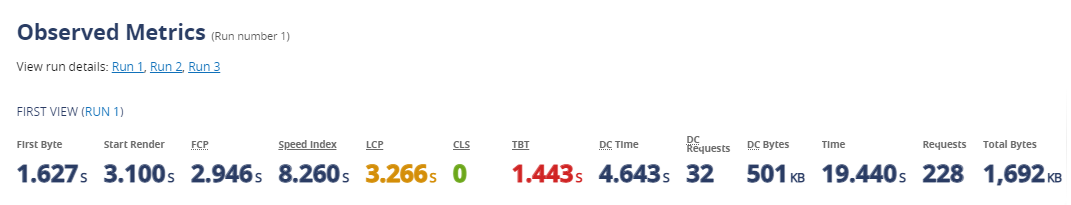

Technical data

The number of requests, the weight of the page, the server response time (Time To First Byte)…

Metrics related to page display and rendering

… and more generally linked to user experience: Speed Index, Start Render, Core Web Vitals such as Cumulative Layout Shift, Largest Contentful Paint…

When to use Synthetic Monitoring

For technical benchmarking

Synthetic Monitoring can be used to evaluate a website’s performance in a given browsing context, and to compare it to the market or to competitors. For example, it allows you to answer the question: “Is my site faster or slower than my main competitor’s in the UK, on 4G, on a mid-range cell phone…?”

For Performance budget monitoring

A Performance budget consists of defining scores to be reached and/or thresholds not to be exceeded, for example: a PageSpeed score of 90 minimum, dividing the Speed Index by 10, limiting the weight of the pages to 1 MB…

Taking measurements at regular intervals under identical conditions allows you to evaluate progress and regression, and to measure the impact of the optimizations deployed.
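A budget of this kind can be checked automatically after each measurement. Here is a minimal sketch, assuming hypothetical `Budget` and `Measurement` shapes and made-up numbers; it is not tied to any specific monitoring tool.

```typescript
// Hypothetical budget: one score to reach and two thresholds not to exceed.
interface Budget {
  minPageSpeedScore: number;
  maxSpeedIndexMs: number;
  maxPageWeightBytes: number;
}

// Hypothetical result of one synthetic run.
interface Measurement {
  pageSpeedScore: number;
  speedIndexMs: number;
  pageWeightBytes: number;
}

// Returns one message per violated limit; an empty array means the budget is met.
function checkBudget(m: Measurement, b: Budget): string[] {
  const violations: string[] = [];
  if (m.pageSpeedScore < b.minPageSpeedScore) {
    violations.push(`PageSpeed score ${m.pageSpeedScore} is below ${b.minPageSpeedScore}`);
  }
  if (m.speedIndexMs > b.maxSpeedIndexMs) {
    violations.push(`Speed Index ${m.speedIndexMs} ms exceeds ${b.maxSpeedIndexMs} ms`);
  }
  if (m.pageWeightBytes > b.maxPageWeightBytes) {
    violations.push(`Page weight ${m.pageWeightBytes} B exceeds ${b.maxPageWeightBytes} B`);
  }
  return violations;
}

// Illustrative values only.
const budget: Budget = { minPageSpeedScore: 90, maxSpeedIndexMs: 3000, maxPageWeightBytes: 1000000 };
const lastRun: Measurement = { pageSpeedScore: 85, speedIndexMs: 2500, pageWeightBytes: 1200000 };
const budgetViolations = checkBudget(lastRun, budget);
```

With these sample values, the score and the page weight are out of budget while the Speed Index is within it.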

For SPOF (Single Point Of Failure) reporting

Synthetic Monitoring tools, notably through their visualization features, can accurately identify slowdowns and pinpoint the resources that may cause problems during page load and degrade the user experience (as shown in the WebPageTest waterfall above).
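As a rough illustration of what this kind of analysis looks for, the sketch below filters waterfall-style entries for render-blocking resources served from another host that took longer than a chosen threshold. The `WaterfallEntry` shape, the host names and the threshold are assumptions for the example, not the export format of any tool.

```typescript
// Hypothetical, simplified view of one request in a waterfall.
interface WaterfallEntry {
  url: string;
  host: string;
  durationMs: number;
  renderBlocking: boolean;
}

// Keeps render-blocking resources from hosts other than the site's own
// whose load time exceeds the threshold, slowest first.
function findSpofCandidates(
  entries: WaterfallEntry[],
  firstPartyHost: string,
  thresholdMs: number
): WaterfallEntry[] {
  return entries
    .filter((e) => e.renderBlocking && e.host !== firstPartyHost && e.durationMs > thresholdMs)
    .sort((a, b) => b.durationMs - a.durationMs);
}

// Illustrative data only.
const sampleWaterfall: WaterfallEntry[] = [
  { url: "https://www.example.com/main.css", host: "www.example.com", durationMs: 120, renderBlocking: true },
  { url: "https://cdn.thirdparty.example/widget.js", host: "cdn.thirdparty.example", durationMs: 4200, renderBlocking: true },
  { url: "https://img.thirdparty.example/banner.jpg", host: "img.thirdparty.example", durationMs: 5000, renderBlocking: false },
];

const spofCandidates = findSpofCandidates(sampleWaterfall, "www.example.com", 1000);
```

In this sample, only the third-party script is flagged: the banner image is slow but does not block rendering, and the style sheet is first-party and fast.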

Real User Monitoring (aka Passive monitoring)

RUM data is collected continuously, rather than at a single point in time as with Synthetic Monitoring, by a script that measures loading times in real users’ browsers.
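To give an idea of what such a script does once it has measurements, here is a minimal sketch of a buffer that batches samples and hands them to a transport function. The `RumBuffer` class, the `RumSample` shape and the batch size are hypothetical; in a browser, the transport could for instance wrap `navigator.sendBeacon` pointed at a collection endpoint of your own.

```typescript
// Hypothetical sample recorded for one page view.
interface RumSample {
  metric: string;
  valueMs: number;
  page: string;
}

// The transport is injected so the buffer does not depend on any browser API.
type Transport = (payload: string) => void;

class RumBuffer {
  private samples: RumSample[] = [];
  private readonly send: Transport;
  private readonly maxBatch: number;

  constructor(send: Transport, maxBatch: number) {
    this.send = send;
    this.maxBatch = maxBatch;
  }

  // Stores a sample and flushes automatically once the batch is full.
  record(sample: RumSample): void {
    this.samples.push(sample);
    if (this.samples.length >= this.maxBatch) {
      this.flush();
    }
  }

  // Sends the pending samples as one JSON payload, then empties the buffer.
  flush(): void {
    if (this.samples.length === 0) {
      return;
    }
    this.send(JSON.stringify(this.samples));
    this.samples = [];
  }
}

// Usage with an in-memory transport (illustrative values).
const sentPayloads: string[] = [];
const rumBuffer = new RumBuffer((payload) => { sentPayloads.push(payload); }, 2);
rumBuffer.record({ metric: "ttfb", valueMs: 180, page: "/home" });
rumBuffer.record({ metric: "load", valueMs: 2400, page: "/home" });
```

Batching keeps the number of network calls low, which matters because the measurement script runs on every real page view.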

The perks of Real User Monitoring

- Analysis of the site’s actual traffic, taking the actions of real users into account

- Continuous measurement

- Covers all users, browsing conditions and geographical areas (no conditions to define before launching a test)

Examples of tools that enable Real User Monitoring

Data collected through Real User Monitoring

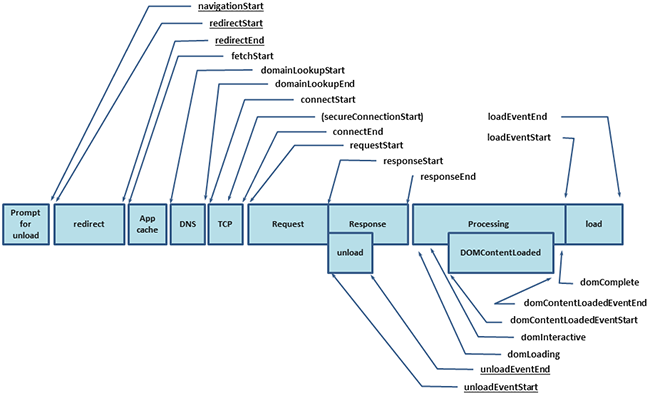

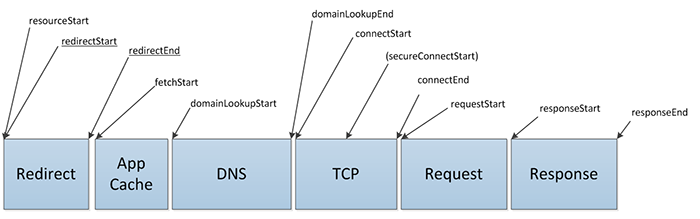

- Navigation Timing: RUM tools take advantage of the Navigation Timing API. Here is the set of data available as a page is loaded:

- Resource Timing: this API lets you measure in detail the loading time of each static resource.

- User Timing: the User Timing API lets you mark and measure specific events in the browser.

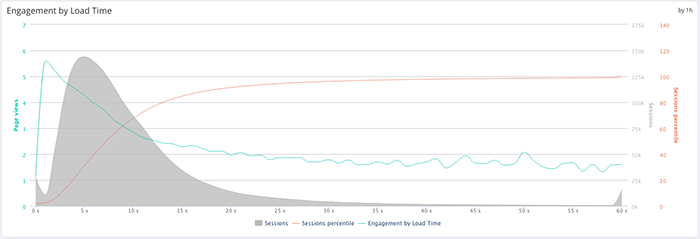

- Conversion rates and other business data: some RUM tools let you correlate loading time with conversion rate. This is possible with Google Analytics or Quanta.
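As an illustration of the Navigation Timing data mentioned in the list above, the sketch below derives a few common durations from a navigation entry. The `NavTimingLike` interface is an assumption that mirrors a subset of the attributes exposed by `PerformanceNavigationTiming`; in a browser, the entry would typically come from `performance.getEntriesByType("navigation")[0]`. The sample values are made up.

```typescript
// Subset of the navigation entry attributes used here (all in milliseconds,
// relative to the start of the navigation).
interface NavTimingLike {
  startTime: number;
  requestStart: number;
  responseStart: number;
  responseEnd: number;
  domContentLoadedEventEnd: number;
  loadEventEnd: number;
}

interface NavigationSummary {
  ttfbMs: number;             // time until the first byte of the response
  downloadMs: number;         // time spent receiving the HTML document
  domContentLoadedMs: number; // time until DOMContentLoaded has finished
  loadMs: number;             // time until the load event has finished
}

function summarizeNavigation(t: NavTimingLike): NavigationSummary {
  return {
    ttfbMs: t.responseStart - t.startTime,
    downloadMs: t.responseEnd - t.responseStart,
    domContentLoadedMs: t.domContentLoadedEventEnd - t.startTime,
    loadMs: t.loadEventEnd - t.startTime,
  };
}

// Illustrative entry only.
const sampleNavigation: NavTimingLike = {
  startTime: 0,
  requestStart: 60,
  responseStart: 210,
  responseEnd: 290,
  domContentLoadedEventEnd: 1400,
  loadEventEnd: 2600,
};

const navigationSummary = summarizeNavigation(sampleNavigation);
```

A RUM tool would compute values like these on each page view and aggregate them across visitors.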

In summary, Synthetic Monitoring allows you to track how your performance evolves over time and to position yourself against your competitors, while RUM lets you observe what your customers actually experience in the field.

As we said in the introduction, these methods are complementary.

Note: since the data collection methods differ, so do the results! Here are some tips for interpreting the data correctly.

Web performance monitoring: what you need to know to understand the results

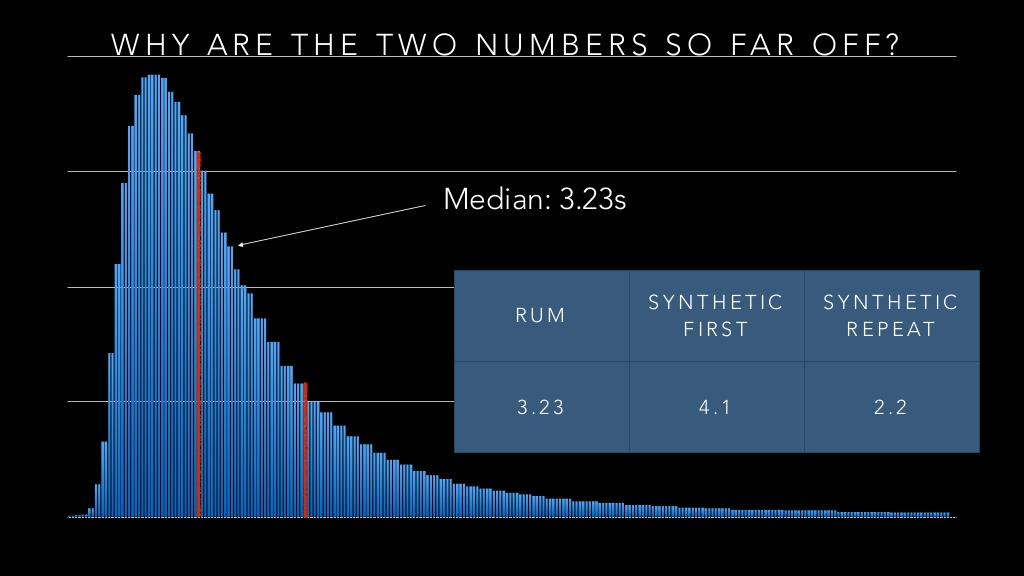

Results from Synthetic Monitoring and Real User Monitoring are difficult to compare, especially since they can be very different. Why is this?

Apart from the data collection conditions, this is because RUM tools combine first-view and repeat-view data collected in very different contexts, since the real user base can be very heterogeneous.

In other words, the metrics mix values collected on pages viewed for the first time with values collected on revisits, where some data is served from a cache (and is therefore potentially faster).

In contrast, with a Synthetic Monitoring tool, measurements are generally taken on a first view, in a single, homogeneous context.
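The effect of this mix can be shown with a small calculation. The sketch below uses a nearest-rank percentile and entirely made-up load times: the first-view samples stand for what a synthetic test would see, and the mixed set stands for what a RUM tool would aggregate.

```typescript
// Nearest-rank percentile: the smallest sample such that at least p% of
// the samples are less than or equal to it.
function percentile(values: number[], p: number): number {
  if (values.length === 0) {
    throw new Error("no samples");
  }
  const sorted = values.slice().sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(0, rank - 1)];
}

// Illustrative load times in milliseconds (not real measurements).
const firstViewMs = [3200, 3400, 3600, 3800, 4000];  // cold cache
const repeatViewMs = [1200, 1300, 1500, 1600, 1800]; // warm cache
const mixedMs = firstViewMs.concat(repeatViewMs);    // what RUM aggregates

const firstViewMedian = percentile(firstViewMs, 50); // 3600
const mixedMedian = percentile(mixedMs, 50);         // 1800
const mixedP75 = percentile(mixedMs, 75);            // 3600
```

With these sample values, the same page shows a median of 3600 ms on first views only and 1800 ms once repeat views are mixed in, so the two figures describe different things rather than contradicting each other.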

Consequently, as shown in this graph, the loading times indicated for the same page can be different:

You must therefore choose the data and the collection context according to what you want to illustrate. Loading speed cannot be summarized in a single figure!

Each KPI has its own value, and the advantage of this variety is that it speaks to every team: E-commerce teams will be sensitive to business gains, SEO teams will be able to set goals for optimizing Core Web Vitals and the PageSpeed score, and technical teams will be able to focus on the metrics to improve in order to relieve the infrastructure.

Everyone at their level has their own benchmarks and requirements, and for a high-performance site, all teams have a role to play.

What metrics should you track and improve?

Discover the essential web performance KPIs to know: